“So? He seems like a nice fellow.”

Why AI art won’t work.

My most Luddite opinion (tough competition): AI art won’t work. Not even if the models get better. Beauty isn’t enough.

No one talks about art without talking about who made it. It’s an essential part of the thing. We connect with it because we know someone made it. It matters who Van Gogh is. We put ourselves in the artist’s shoes, or they repulse us. We react. We can feel what they do and identify with it, or be stupefied by an experience so alien to our own… If we know it’s a machine, the whole thing falls apart.

There’s a genre of pro-AI art take in which the writer points out that people can’t tell the difference between AI-generated images and paintings by human artists. But that test, like the Turing Test, misses the point. It’s not whether people can be fooled that matters, but what happens when they know the truth. We’ll know the robots are true intelligences when you can tell a human being that they’ve been talking to a robot, and they respond, “So? He seems like a nice fellow.”

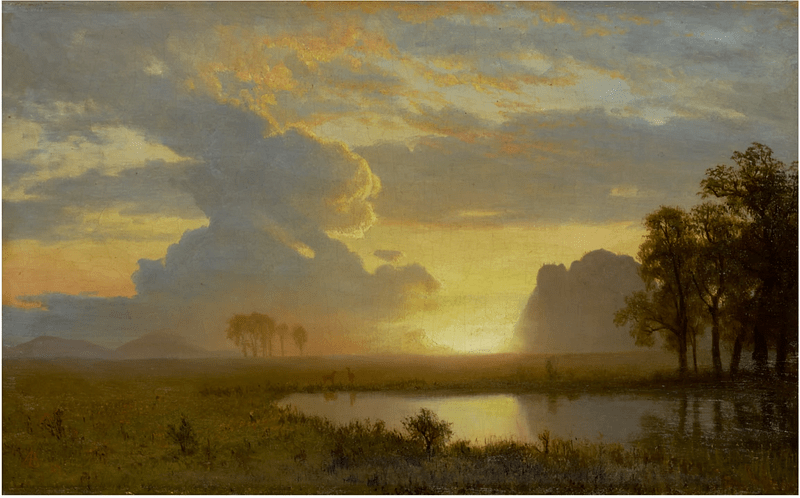

If you show folks two pictures and tell them AI made one and a human artist made the other, they’ll choose the human one — and mean it. It’s not just loyalty to carbon over silicon. It’s a sense of connection. They know that any artistic insight generated via AI comes from endless training data and some opaque process of aggregating that information, not from a particularly foggy morning or falling in love.

It’s an uncanny valley: the models produce something that looks like art, but it has no biography, no connection, and look, frankly, the robots have never smoked clove cigarettes or said anything unbearably pretentious but also sort of true, so I don’t think they’ll make it in the art world.

Zach

LinkedIn: https://linkedin.com/in/zlflynn

Website: https://zflynn.com

Udemy course: https://www.udemy.com/course/identifying-causal-effects-for-data-scientists/