“It Takes Too Long To Experiment”

But this is wrong!

If you’ve ever worked in an area of data science bordering on experimentation or if you’ve worked in Product, Marketing, etc., in an environment where experiments are possible, you’ve probably heard (or even said — it’s okay, this is a safe space) that ideally we’d run an experiment here, but we need to “move fast.”

I’m going to make the case that there isn’t a tradeoff. Experimentation maximizes velocity.

Admittedly, there is a tradeoff between Experimentation and Speed, but Speed is not Velocity!

Speed is how fast you’re moving, but Velocity has a direction. A sign. A destination you’re drifting towards.

If you don’t care whether you’re moving in the right direction, then sure, you don’t need to run experiments — or do any other analysis for that matter. Just move.

That’s not what we want to do.

“Never confuse movement for action.”

From a letter Hemingway allegedly wrote to Marlene Dietrich, probably not about A/B testing. Likely a quote of a quote. Unoriginal. An old proverb. A truism. The point is it’s a saying that I found on the Internet that made the point I wanted to make.

So, what you’ll hear people propose is: “let’s just launch it and see how it looks [as if it were easy!] after a few weeks.”

Now, if things go exceptionally well or exceptionally poorly, you probably will be able to just “see how it looks”. Sometimes, “sciency” folks get a little picky here, but if metrics suddenly jump up or down an enormous amount and stay consistently at those new levels for weeks, and the timing happens to line up with exactly when you launched the thing…

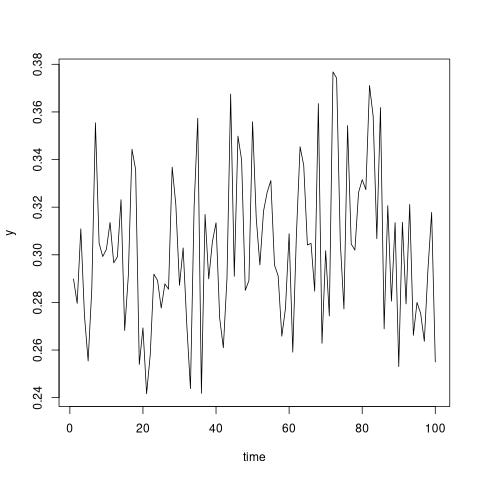

But 99.9% of product changes (conservatively) are not like this. They slightly improve conversion rates. They don’t cause massive jumps in time series. Most product improvements shift company metrics by less than the metrics’ normal day-to-day variation. For example, here is a made-up time series where there is a 2% average improvement in the metric at some point. That’s a big win! Can you spot the improvement in the series?
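To get a feel for why a 2% lift is hard to see by eye, here is a small simulation in the same spirit as a made-up series like that. It is a sketch under assumed numbers: a baseline of 1,000 per day, 5% day-to-day noise, a mild weekly cycle, and a launch on day 60 with a true 2% lift. `simulate_series` and `naive_pre_post_lift` are hypothetical helpers written for this example. The code repeats the "launch it and see how it looks" comparison (three weeks after launch against three weeks before) on 1,000 simulated worlds that all share the same true effect.

```python
import numpy as np


def simulate_series(n_days=120, launch_day=60, base=1000.0, daily_noise_sd=0.05,
                    lift=0.02, seed=None):
    """Hypothetical daily metric with multiplicative noise and a lift after launch_day."""
    rng = np.random.default_rng(seed)
    days = np.arange(n_days)
    weekly = 1.0 + 0.03 * np.sin(2 * np.pi * days / 7)     # assumed weekly cycle
    noise = rng.normal(1.0, daily_noise_sd, size=n_days)    # assumed 5% day-to-day noise
    effect = np.where(days >= launch_day, 1.0 + lift, 1.0)  # true lift from launch onward
    return base * weekly * noise * effect


def naive_pre_post_lift(series, launch_day, window=21):
    """'Launch it and see how it looks': mean of the window after launch vs. the window before."""
    pre = series[launch_day - window:launch_day].mean()
    post = series[launch_day:launch_day + window].mean()
    return post / pre - 1.0


# Repeat the before/after comparison in 1,000 simulated worlds, all with a true 2% lift.
estimates = np.array([naive_pre_post_lift(simulate_series(seed=s), launch_day=60)
                      for s in range(1000)])

print("true lift: 2.0%")
print(f"naive estimates, 5th to 95th percentile: "
      f"{np.percentile(estimates, 5):.1%} to {np.percentile(estimates, 95):.1%}")
print(f"share of worlds where the launch looks harmful: {(estimates < 0).mean():.0%}")
```

Under these assumptions the naive estimate swings by a couple of percentage points either way around the true 2%, and in a noticeable share of worlds the launch even looks harmful. Real metrics usually also have trends, holidays, and other launches, which only widen the spread.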

Another issue with analyzing just the time series: companies make many product and marketing changes at the same time, so how are we going to distinguish which change is responsible for improving metrics, and which is holding us back?
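The attribution problem can be sketched the same way. Below, two hypothetical changes ship on the same day: change A, with an assumed true +2% relative effect on conversion, and change B, with an assumed -1.5%. The before/after view only sees their combined effect (for simplicity, this sketch also assumes nothing else changes between the two periods). Randomizing A alone, while B goes to everyone, isolates A's effect. All rates and sample sizes are made up for illustration, and `observed_rate` is a hypothetical helper.

```python
import numpy as np

rng = np.random.default_rng(7)

BASE_RATE = 0.10      # hypothetical conversion rate before either launch
LIFT_A = 0.02         # hypothetical true relative effect of change A
LIFT_B = -0.015       # hypothetical true relative effect of change B, shipped the same day
N_USERS = 10_000_000  # hypothetical users observed per period


def observed_rate(n_users, true_rate):
    """Observed conversion rate for n_users who each convert with probability true_rate."""
    return rng.binomial(n_users, true_rate) / n_users


# Time-series view: everyone gets both A and B at once; compare before vs. after.
before = observed_rate(N_USERS, BASE_RATE)
after = observed_rate(N_USERS, BASE_RATE * (1 + LIFT_A) * (1 + LIFT_B))
before_after = after / before - 1

# Experiment view: B goes to everyone, A is randomized 50/50 over the same period.
control = observed_rate(N_USERS // 2, BASE_RATE * (1 + LIFT_B))
treatment = observed_rate(N_USERS // 2, BASE_RATE * (1 + LIFT_A) * (1 + LIFT_B))
ab_estimate = treatment / control - 1

print(f"before/after: {before_after:+.2%} (A and B blended together)")
print(f"A/B estimate for change A: {ab_estimate:+.2%} (true {LIFT_A:+.2%})")
```

The before/after number lands near +0.5%, the net of both changes, and says nothing about which change helped and which hurt. The randomized comparison recovers A's effect because both arms are exposed to B.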

So, what’s the alternative to running an experiment if:

We’re maximizing velocity, not speed. We want to know that we’re moving in the right direction.

We’re not massively altering our product. So, we’re not expecting metric movements of +/- 20%.

We’re changing many product features at the same time.

The obvious candidate is a causal inference method based on observational data, but let’s think about how that would work in practice.

While there’s an enormous variety of causal inference methods (learn some of them in my Udemy course — look, I’m learning how to plug things!), they all share a similar structure:

There is a time before customers were treated with the new experience, and we’ll assume we can use that to establish a baseline for the treated population.

There is a set of customers who do not receive the experience at any time, so we can use changes in their metrics over time to separate the effect of everything else going on at the company (or in the broader economy or industry) from the effect of the actual product change.
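As a concrete instance of this structure, here is a sketch of difference-in-differences, one of the simplest designs that combines a pre-period baseline with an untreated comparison group (it is one example among many such methods). The data is simulated under assumed numbers: a company-wide shock of +5 that hits everyone at launch, a true product effect of +2 for the treated group only, and an untreated group that sits at a different level but follows the same trend.

```python
import numpy as np

rng = np.random.default_rng(42)

# Hypothetical daily metric for two groups over 60 days; the change launches on day 30
# for the treated group only. All numbers are illustrative assumptions.
n_days, launch_day = 60, 30
days = np.arange(n_days)
post = days >= launch_day

company_wide_shock = np.where(post, 5.0, 0.0)  # e.g. a marketing push that hits everyone
true_effect = 2.0                              # effect of the product change on treated users

treated = 100.0 + company_wide_shock + true_effect * post + rng.normal(0, 1.0, n_days)
untreated = 90.0 + company_wide_shock + rng.normal(0, 1.0, n_days)  # different level, same trend

# Naive before/after on the treated group absorbs the company-wide shock.
naive = treated[post].mean() - treated[~post].mean()

# Difference-in-differences: subtract the untreated group's change over the same window.
did = naive - (untreated[post].mean() - untreated[~post].mean())

print(f"naive before/after: {naive:.2f}")
print(f"difference-in-differences: {did:.2f} (true effect {true_effect})")
```

The naive comparison attributes the company-wide shock to the product change, while difference-in-differences lands near the true effect. That only works because the simulation builds in the key assumption (parallel trends: absent the change, both groups would have moved together). With real observational data that assumption can't be verified directly.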

So, it’s just another method of holding some people out of treatment, except that the resulting analysis is much less credible and depends on a bevy of assumptions. We have to wait to see results anyway. If the results look bad, there’s always a discussion of whether the change is really bad or the method is…

Why bother, if you have the choice? Go with the finest and loveliest method, the original. The experiment doesn’t have to be 50–50, A vs B. Even a small “A” group protects against the worst outcomes, which are always more likely than you think.

Another way to think about it: why would the best allocation for the control group be 0%? Why not something else, just a little more?
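One way to make the allocation question concrete is to look at how the smallest detectable effect changes with the size of the control group. The sketch below uses the standard normal-approximation formula for comparing two conversion rates, with assumed inputs: 1,000,000 users, a 10% baseline conversion rate, a two-sided 5% significance level, and 80% power. `minimum_detectable_effect` is a hypothetical helper written for this example, and it simplifies by using the baseline variance in both arms.

```python
from math import sqrt
from statistics import NormalDist


def minimum_detectable_effect(control_share, n_users, base_rate, alpha=0.05, power=0.80):
    """Smallest absolute difference in conversion rate a two-sided z-test can reliably detect
    (normal approximation, baseline variance assumed in both arms)."""
    if not 0 < control_share < 1:
        return float("inf")  # with no control group (or no treatment group) nothing is measurable
    z = NormalDist().inv_cdf(1 - alpha / 2) + NormalDist().inv_cdf(power)
    n_control = control_share * n_users
    n_treatment = (1 - control_share) * n_users
    se = sqrt(base_rate * (1 - base_rate) * (1 / n_control + 1 / n_treatment))
    return z * se


# Hypothetical launch: 1,000,000 users, 10% baseline conversion.
for share in [0.50, 0.20, 0.10, 0.05, 0.01, 0.0]:
    mde = minimum_detectable_effect(share, n_users=1_000_000, base_rate=0.10)
    print(f"control share {share:>4.0%}: detectable relative change ~{mde / 0.10:.1%}")
```

Under these assumptions a 50/50 split can detect a relative change of roughly 1.7%, a 10% control group roughly 2.8%, and even a 1% control group can still flag a change of roughly 8.4%, which is the kind of large regression you most want to catch. At 0%, nothing is detectable at all.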

Thanks for reading!

Zach

Connect at: https://linkedin.com/in/zlflynn

Check out my Udemy course on causal inference: https://www.udemy.com/course/identifying-causal-effects-for-data-scientists/?couponCode=A105FEABA0A750B7BB41

If you want my help with any Experimentation, Analytics, etc. problem, click here.